I love learning about how the CPU works. So much of what we do in programming is kind of conceptual, with design patterns and abstractions and the like, but at the end of the day there's a really intensely engineered little chip that you ignore at your own peril. Finding out how to write code that works at a high level while also functioning appropriately when the rubber hits the road is a never-ending process.

If you haven't heard:

A couple weeks back I decided it's time I take the plunge and actually learn how to program.

I've written simple scripts in the past, and anything else I've needed I've been able to get ChatGPT to piece together for me, but I've gotten to the point where I don't want to have to use ChatGPT.

I don't want to be a vibe coder, I want to actually know how to write professional code that will actually do the things I want it to do.

I had a couple classes in college for web dev, but other than that my experience is primarily networking and cloud stuff.

I decided to start off with learning Python and I'm going through the Codecademy course for it now, but my concern is: once I finish the course, what do I do from there?

I'm 3/4 of the way through the course now and the main thing I've realized is that once I finish I will still be far from what I'd consider competent at Python.

There are so many coding principles and practices I feel like I'll still need to learn, plus the use of libraries, and other things that I'm not really sure how to describe other than as computer science knowledge.

I guess I'm asking, how do I continue my learning in a way that will actually help me learn? I feel like there are currently a lot of "unknown unknowns" for me, and I'd like some advice on what to do about that.

I'm gradually working through Automate the Boring Stuff with Python, not because I really need the material, but to determine whether I can recommend it to n00bs. I restarted with the third edition, which seems to have made some improvements, but the author still makes the peculiar choice of using camelCase where the vast majority of Python programmers would use snake_case (though Python typically does use PascalCase for classes). Just ignore it when the author does that and it's actually quite a good book so far. (I'm now in chapter 10.) The introduction does a lot to answer why you would want to be a programmer:

"'You've just done in two hours what it takes the three of us two days to do.' My college roommate was working at a retail electronics store in the early 2000s. Occasionally, the store would receive a spreadsheet of thousands of product prices from other stores. A team of three employees would print the spreadsheet onto a thick stack of paper and split it among themselves. For each product price, they would look up their store’s price and note all the products that their competitors sold for less. It usually took a couple of days.

'You know, I could write a program to do that if you have the original file for the printouts,' my roommate told them when he saw them sitting on the floor with papers scattered and stacked all around.

After a couple of hours, he had a short program that read a competitor's price from a file, found the product in the store's database, and noted whether the competitor was cheaper. He was still new to programming, so he spent most of his time looking up documentation in a programming book. The actual program took only a few seconds to run. My roommate and his co-workers took an extra-long lunch that day.

This is the power of computer programming. A computer is like a Swiss Army knife with tools for countless tasks. Many people spend hours clicking and typing to perform repetitive tasks, unaware that the machine they’re using could do their job in seconds if they gave it the right instructions."
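For a sense of how small a program like the roommate's can be, here is a minimal sketch in Python. It is not the program from the book, which the intro never shows. The CSV layout, the `sku` and `price` column names, the sample prices, and the `find_cheaper_competitor_prices` helper are all made up for illustration, and a plain dict stands in for the store's database.

```python
import csv
import io

# Hypothetical sample data: a competitor's price spreadsheet exported as CSV.
COMPETITOR_CSV = """sku,price
A100,19.99
B200,5.49
C300,102.00
"""

# Hypothetical stand-in for the store's own price database.
STORE_PRICES = {"A100": 21.50, "B200": 5.25, "C300": 102.00}


def find_cheaper_competitor_prices(competitor_file, store_prices):
    """Return (sku, competitor_price, store_price) for items the competitor sells for less."""
    cheaper = []
    for row in csv.DictReader(competitor_file):
        sku = row["sku"]
        competitor_price = float(row["price"])
        store_price = store_prices.get(sku)
        # Skip products the store does not carry at all.
        if store_price is not None and competitor_price < store_price:
            cheaper.append((sku, competitor_price, store_price))
    return cheaper


if __name__ == "__main__":
    results = find_cheaper_competitor_prices(io.StringIO(COMPETITOR_CSV), STORE_PRICES)
    for sku, theirs, ours in results:
        print(f"{sku}: competitor {theirs:.2f} < ours {ours:.2f}")
```

With a real export you would pass an open file instead of the `io.StringIO` wrapper; the comparison loop stays the same.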

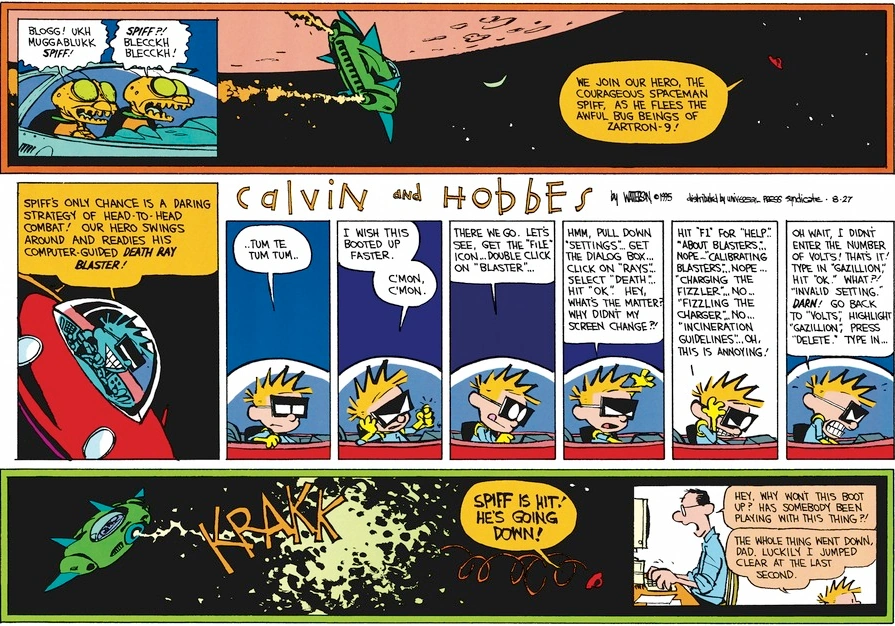

^ That quote actually goes to why I don't like using the open source GIS software tool QGIS. It's not because it's a horrible piece of garbage, far from it, but because using a zillion toolbars and panels and dialog boxes like a normal person has become very tedious to me. It's like this:

Instead I opted to learn how to carry out geospatial tasks in Python. While a) for certain tasks a GUI like QGIS or ArcGIS is indispensable and b) QGIS and ArcGIS themselves support scripting in Python, I found that just diving in with Python libraries like GeoPandas was far more efficient for my purposes.

python courses are notorious for not teaching you any higher level concept than just how to use python

You get out what you put in. I'm willing to bet there is far more information on learning data structures and algorithms out there in Java and even Python than what is available for Scheme or other Lisps. On that note, AI: A Modern Approach switched over from Common Lisp to Python, and though there has been some grousing about that decision, I'd be surprised if anyone made a compelling case that the text has thereby been crippled.

i think stuff like scheme is a bit less distracting because it has way less features

That's also how it got so balkanized. Why should a beginner have to find out how whatever Scheme they're using implements dictionaries if it does at all?
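To make the dictionary point concrete, here is a small sketch of what a beginner gets out of the box in Python. The `word_counts` helper is just an illustrative name, not something from any course or book mentioned here.

```python
def word_counts(text):
    """Count how often each word appears, using only the built-in dict type."""
    counts = {}
    for word in text.lower().split():
        # dict.get returns the default (0) when the word has not been seen yet.
        counts[word] = counts.get(word, 0) + 1
    return counts


if __name__ == "__main__":
    print(word_counts("the cat and the hat"))
```

No imports and no implementation-specific library lookup are needed; the same code runs on any Python 3 interpreter.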

i would always like a newbie to do things themself and not copy paste because copying and pasting is not conducive to learning

Yes, I remember being told to write stuff down in a way that seemed pointless in middle school history class of all places and the teacher mentioned there is actual pedagogy behind what seemed like busy work at the time. I later read something to that effect which would explain why I never feel like I learn anything after copy/pasting code.